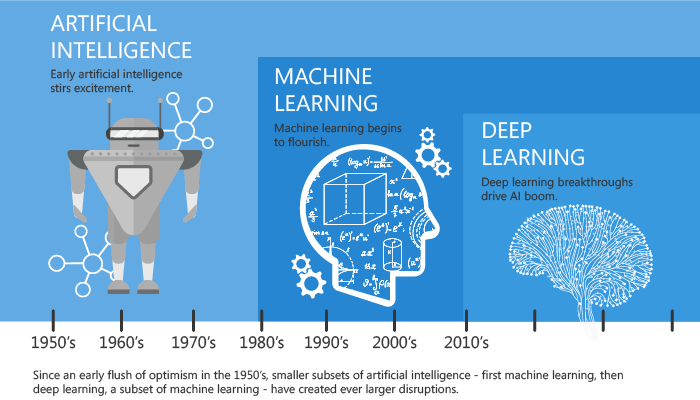

Artificial Intelligence (AI) has become a hot topic in the mass media, despite dating back to the 1950s. From the beginning it has followed two main directions. The first is based on logical / mathematical models, in line with the scientific method initiated by Galileo Galilei that has driven Western development over the last few centuries. The second builds on what we know about how the human mind works, hence the term “neural networks” and, more recently, “deep learning”. For decades the practical progress of both strands of research was rather disappointing, especially for neural networks: compared with the competing approach, they require much more data and computing power for the preliminary model synthesis phase, called Machine Learning (ML). This caused a long “AI Winter” in which research was mainly confined to academia, with limited practical applications.

Why now?

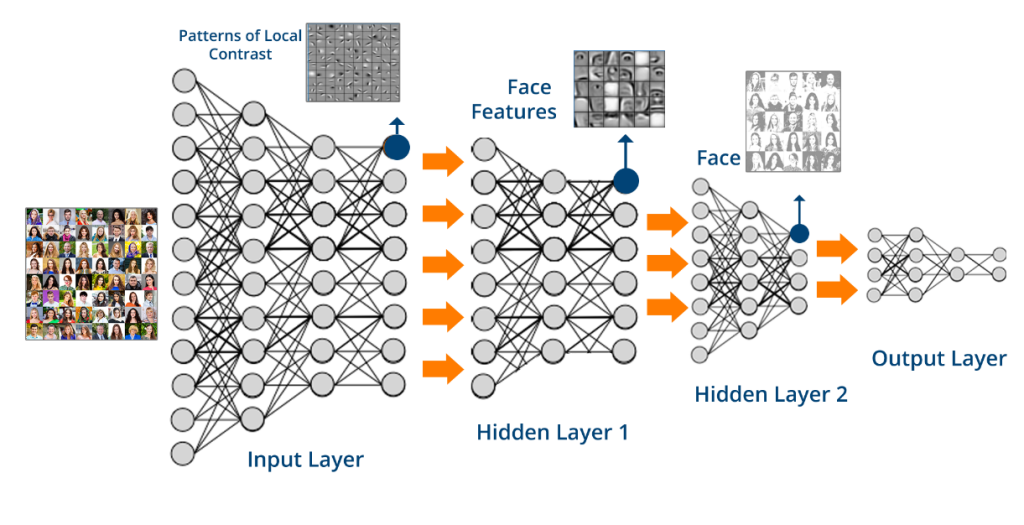

The recent “AI Spring” is due to Cloud Computing, which provides both the abundance of data needed to “feed” ML and enormous computing power to anyone who knows how to use it. The first strand to flourish was the logical / mathematical one, but shortly afterwards “Deep Neural Networks” (DNNs) produced the most striking results. They are called “deep” because intermediate layers are inserted between the input and output nodes, each specializing in a different aspect of the problem, which multiplies the complexity of the model.

While the model synthesized by a logical / mathematical approach can often be reduced to an equation, a DNN model consists of several matrices whose parameters, optimized by ML and then evaluated at execution time, number in the millions or even billions.
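To make the contrast concrete, here is a minimal sketch in plain Python. It assumes a simple fully connected network with a ReLU between layers; the layer sizes, the tiny weights and the function names are illustrative choices, not taken from any specific product or library.

```python
def count_parameters(layer_sizes):
    """Count weights plus biases of a fully connected network."""
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out + n_out  # one weight matrix and one bias vector
    return total


def forward(x, layers):
    """Evaluate the network: each layer is a (weight_matrix, bias_vector) pair."""
    for index, (weights, biases) in enumerate(layers):
        x = [sum(w * v for w, v in zip(row, x)) + b
             for row, b in zip(weights, biases)]
        if index < len(layers) - 1:
            x = [max(0.0, v) for v in x]  # ReLU on the intermediate layers only
    return x


# A straight line y = a*x + b has just 2 parameters.
print(count_parameters([1, 1]))              # 2
# A still very small network for 28x28 images (sizes are an assumption).
print(count_parameters([784, 512, 256, 10])) # 535818

# Hypothetical two-layer network with hand-picked weights.
tiny_layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, -1.0]),
    ([[2.0, 1.0]], [0.5]),
]
print(forward([3.0, 1.0], tiny_layers))      # [5.5]
```

Even this toy architecture already has more than half a million parameters, and each added layer contributes another full matrix.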

The leap forward in computer vision, which makes it possible for example to recognize a customer’s gender, age and mood, and the ability to understand the sense of entire sentences in order to hold useful man-machine conversations, possibly with automatic translation between two languages, are only examples of a price / performance ratio that was difficult to predict 10 years ago.

This success is due both to scientific progress and to the innovative use of special hardware such as GPUs (Graphics Processing Units). Designed to handle video in PCs, they can process a limited subset of instructions much faster and more efficiently than traditional CPUs (Central Processing Units).

Even in sales forecasting, the most natural field for logical / mathematical models, the neural trend is spreading, with variants specialized in the analysis of time series called LSTM (Long Short-Term Memory).

In the main geopolitical areas of the world, AI is regarded as the main catalyst of the current Fourth Industrial Revolution. The US was the pioneer and has recently been caught up by China, at least in terms of investment. In this race, similar to the space race between the US and the USSR in the last century, China is exploiting its direct control over large technology companies and over inhabitants who, through their daily behaviour, provide the raw data for AI algorithms. Other geopolitical areas are far behind and likely to suffer US-China hegemony.

Widespread apprehension about the future of work and of our civilization has arisen in Western public opinion. With regard to employment, since the human mind and AI have complementary strengths and weaknesses, the best path seems to be human-machine collaboration, avoiding excessive automation, which is often even uneconomical. As for long-term impacts, we should not forget that the negative sides usually get the greatest media coverage. Nevertheless, since AI is the most powerful technical instrument invented so far, its use requires an ethical / philosophical vision and a political practice to which Europe can contribute a great deal.

Only in the Cloud?

While the Cloud remains the best environment for the learning phase, especially for DNNs, execution is moving to the edge in line with the “Edge and Fog Computing” trend, where the first term identifies the concept and the second the technology. Bringing processing as close as possible to the point where data is generated and used drastically reduces latency (the delay between sending data and receiving the result) and the volume of data moved across the internet. With the expansion of IoT (Internet of Things), reaction times, the saturation of communication channels and the ability to work even when disconnected are increasingly critical.
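A back-of-the-envelope sketch can illustrate the two effects. Every figure below (round-trip time, inference time, frame size, event size) is a made-up assumption chosen only to show the arithmetic, not a measurement of any real system.

```python
def response_time_ms(network_round_trip_ms, inference_ms):
    """Latency seen by the device: network delay plus model execution."""
    return network_round_trip_ms + inference_ms


def upload_gb(items_per_second, bytes_per_item, hours):
    """Data sent over the internet in the given number of hours, in gigabytes."""
    return items_per_second * bytes_per_item * hours * 3600 / 1e9


# Assumed scenario: one store camera, open 12 hours a day.
# Cloud: every frame travels to a fast remote server.
cloud_latency = response_time_ms(network_round_trip_ms=80, inference_ms=10)
cloud_traffic = upload_gb(items_per_second=10, bytes_per_item=100_000, hours=12)

# Edge: a slower local chip runs the model; only small events are uploaded.
edge_latency = response_time_ms(network_round_trip_ms=0, inference_ms=30)
edge_traffic = upload_gb(items_per_second=1, bytes_per_item=200, hours=12)

print(cloud_latency, edge_latency)   # 90 30
print(cloud_traffic, edge_traffic)   # about 43.2 GB versus about 0.00864 GB
```

Under these assumptions the edge device answers faster even though its processor is slower, and it uploads a tiny fraction of the data because the raw frames never leave the camera.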

Recent frameworks and exchange formats, including Caffe, TensorFlow and ONNX (Open Neural Network Exchange), help DNN models find their way onto smartphones and even less powerful IoT devices. In addition, the latest version of Windows 10 introduced new programming interfaces to integrate AI models into PCs and tablets, allowing transparent use of GPUs where available.

Amazon and Microsoft are bringing to market cameras with on-board AI that will be very useful in Retail. A year ago, I conjectured that the initial problems of Amazon Go (the first supermarket without cashiers) might have been caused by the use of cameras relying on centralized AI in the Cloud: http://akite.net/en/news/what-may-have-gone-wrong-in-the-first-amazon-go-tests

Are Deep Neural Networks only for multinationals?

The availability of huge volumes of data and computing power, together with almost all of the brightest minds around, favours the few large multinationals that have been investing in AI for years.

Nevertheless, this does not prevent small specialized companies from using DNNs in vertical sectors through what is called model customization. Several IT giants are beginning to provide developers with ready-made models and simple tools to refine the learning process with a few specific examples.

In computer vision, this customization process requires only tens or hundreds of images specific to the application, skipping the analysis of millions of generic ones. In voice recognition, it means skipping the processing of years of speech and concentrating on a few minutes of domain-specific words and/or a regional accent. It is like teaching a work task not to a baby but to a young adult who has already developed their mental faculties and completed a normal course of study.
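The idea can be sketched in a few lines of plain Python. This is a toy illustration under loud assumptions: `pretrained_features` is a hypothetical stand-in for a large ready-made model that stays frozen, and only a small logistic "head" is trained on eight invented examples. Real customization tools work on images or audio, but follow the same pattern.

```python
import math


def pretrained_features(sample):
    """Hypothetical frozen model: turns raw input into two useful features."""
    mean = sum(sample) / len(sample)
    spread = max(sample) - min(sample)
    return [mean, spread]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_head(samples, labels, epochs=2000, lr=1.0):
    """Train only a small logistic layer on top of the frozen features."""
    feats = [pretrained_features(s) for s in samples]
    weights, bias, n = [0.0] * len(feats[0]), 0.0, len(feats)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(weights), 0.0
        for f, y in zip(feats, labels):
            err = sigmoid(sum(w * x for w, x in zip(weights, f)) + bias) - y
            grad_w = [g + err * x for g, x in zip(grad_w, f)]
            grad_b += err
        # Full-batch gradient descent step on the head parameters only.
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


def predict(sample, weights, bias):
    f = pretrained_features(sample)
    score = sum(w * x for w, x in zip(weights, f)) + bias
    return 1 if sigmoid(score) >= 0.5 else 0


# Eight invented, application-specific examples (label 0 = "dark", 1 = "bright").
SAMPLES = [[0.1, 0.2, 0.1], [0.2, 0.3, 0.2], [0.0, 0.1, 0.2], [0.3, 0.2, 0.1],
           [0.8, 0.9, 0.7], [0.9, 0.8, 1.0], [0.7, 0.9, 0.9], [0.6, 0.8, 0.9]]
LABELS = [0, 0, 0, 0, 1, 1, 1, 1]

weights, bias = train_head(SAMPLES, LABELS)
print([predict(s, weights, bias) for s in SAMPLES])
```

Only three numbers (two weights and one bias) are learned here, which is why a handful of examples is enough; the heavy lifting is assumed to have been done once, elsewhere, by whoever trained the frozen model.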

Conclusion

The answer to the initial question is positive: neural networks currently have a clear advantage. But since the 1950s the popularity of the different algorithms and approaches to AI has often reversed, and it could happen again. The end of the game is still far away.

© Copyright 2023 aKite srl – Privacy policy | Cookie policy